"Premium HDMI Cable" Certification -- What is it, and Why?

Recently a new top tier of HDMI cable certification was announced by HDMI Licensing, who administer the spec: the "Premium HDMI Cable." We haven't seen a new bandwidth certification for a while -- not since HDMI 1.3 introduced the two-tiered "high-speed" and "standard" HDMI system -- and so naturally this new certification level, in which Blue Jeans Cable was one of the earliest participants, raises a lot of questions. What is a "Premium" HDMI Cable? Do I need this for 4K video? What does the certification mean?

And with this new certification have come, as well, a lot of skeptical remarks from people who are pretty sure that because HDMI is "all ones and zeros" it's easy to handle HDMI signalling and that these various cable certifications are a bunch of hooey designed to sell expensive cables. In addition to clearing up some of the basic questions people have, we'd like to be sure to get the truth out there about what the certification means, why it could be important, and why "it's all ones and zeros" isn't as good an answer to the whole question of digital cable quality as it might seem at first to be.

"It's All Ones and Zeros," so Cable Quality Doesn't Matter -- or Does It?

There is no more reliable theme, in discussions about HDMI cable, than the claim that "it's all ones and zeros" and that cable quality therefore doesn't actually matter. This viewpoint is simple, straightforward, and intuitively quite appealing -- after all, how hard can it be to tell ones from zeros?

This view of things has a true part and a false part (rather like a Boolean one and zero, really) and people are apt to mistake the true part for the false part and vice versa. What's true, in this understanding of digital communication, is that if (underscore: if) all of the data in a signal running through a cable get through to the destination circuit, intact and without errors getting in, no further improvement in the cable will make any difference to the quality of the result. We've been telling people this since the days when the fastest digital communication in most homes was running over a S/PDIF cable, and it's just as true now as it was then; what surprises us is that after years of spreading this gospel, we've found that it is very easy to misunderstand.

The false part of this line of thinking is embedded in the easy way people breeze through that word "if." It's a common assumption that because ones and zeros seem fairly unlike one another, it's always easy to send them down a wire, or down a bundle of wires, and recover the bitstream accurately at the other end. If you'd like to read more about this, see our article "All Ones and Zeros" (which, though written about Ethernet cable, is fully applicable to HDMI). But the quick summary of the subject is this: when you've got to send six billion ones and zeros down a pair of wires per second, electricity doesn't behave quite so simply as the "on-off" model which people usually have in mind.

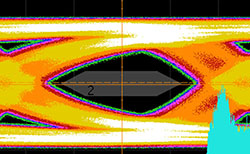

Instead of distinct "ones" and "zeros," the voltage of the signal drifts around a wide range of values, many of which are closer to one half than to one or zero. The signal generates its own interference, in the form of return loss (signal reflected back down the line due to changes in characteristic impedance). The three data channels in the HDMI cable interfere with each other, in the form of crosstalk. And the differential nature of the signaling, with mirror-image signals traveling on two wires, is subject to smearing over time from such things as the capacitance between those wires and small differences in their electrical lengths (bear in mind that a "bit" at 6 Gbps is only about an inch and a third long in a PE dielectric cable!), so that transitions from "one" to "zero" and vice versa get blurred. What started as a relatively clean and straightforward signal switching between two discrete values turns into a signal that can no longer be correctly read. Again, for more details, see our article "All Ones and Zeros."

The Need for Speed

As said above, it matters whether you're sending ones and zeros at a slow speed or at a high speed; this is because, much as we'd like to think of transitions between ones and zeros as being the sort of thing that happen instantaneously, these transitions start to look very gradual when you're chopping each second into six billion pieces and looking at what happens within each piece.

If you've ever wondered why there is such a thing as "Cat 5e" cable and "Cat 6" cable, when both of 'em just have eight little wires that each run from a pin on one plug to the same corresponding pin on another, well, it's the same thing: as frequencies rise, getting consistent performance out of your materials requires increasingly tight manufacturing tolerances and, sometimes, entirely new design considerations. The impedance variability that didn't cause trouble at 100 MHz can be awful at 250. How precisely a data cable needs to be made depends intimately upon just what you're going to try to run through it. The coaxial cable suitable for running CATV signals works fine, within its domain -- but try to run 6G SDI through it, and terrible things can happen.

No Ethernet standard in common use requires conventional twisted pairs to perform beyond 500 MHz (Cat 7A takes it to 1 GHz, but is not in widespread use), and getting those pairs to perform at such a high frequency has required Ethernet cable manufacturers to do all manner of clever things to control dimensions, manage twist rates, control pair spacing, and the like. From the beginning, it was clear that HDMI was going to demand far more from twisted pairs than the data world did. The first HDMI specification documents set the single-link maximum bandwidth exactly where it had been for HDMI's predecessor, DVI: 1.65 Gbps per data pair, for a total HDMI bandwidth of 4.95 Gbps. While frequencies don't map neatly to bitrates, for the reason that data signals aren't perfectly periodic (see our article "Tested at the Frequency" for more on this), the general rule in a simple one-zero encoding system like HDMI is that the top fundamental frequency is 1/2 the bitrate, and that good performance up to the third harmonic, where much of the "shoulder" energy of the signal is, is needed to convey that signal faithfully, so 1.65 Gbps equates to a whopping 2.475 GHz -- five times the bandwidth of Cat 6A. Despite this, it was possible to build compliant cables under the original HDMI specification out to around 40 feet, and in practice, non-compliant cables beyond that length often (though not always) worked just fine, too.
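The rule of thumb above is easy to check with a few lines of arithmetic. The following is only a sketch of that rule (fundamental at half the bitrate, useful energy out to the third harmonic), not a statement of how any HDMI test is actually specified. The function name is made up for illustration, and the 3.4 and 6.0 Gbps entries are the per-channel rates of the later HDMI versions discussed in this article.

```python
CAT6A_LIMIT_GHZ = 0.5  # Cat 6A twisted pair is specified to 500 MHz


def third_harmonic_ghz(bitrate_gbps: float) -> float:
    """Rule-of-thumb bandwidth need for a simple binary signal."""
    # An alternating 1-0-1-0 pattern completes one cycle every two bits,
    # so the top fundamental frequency is half the bitrate.
    fundamental_ghz = bitrate_gbps / 2
    # Much of the remaining useful energy sits at the third harmonic.
    return 3 * fundamental_ghz


# Per-channel bitrates: original HDMI, HDMI 1.3/1.4, HDMI 2.0
for rate in (1.65, 3.4, 6.0):
    need = third_harmonic_ghz(rate)
    ratio = need / CAT6A_LIMIT_GHZ
    print(f"{rate} Gbps/channel -> {need:.3f} GHz ({ratio:.2f}x Cat 6A)")
```

For 1.65 Gbps this reproduces the 2.475 GHz figure quoted above, a little under five times the 500 MHz Cat 6A limit.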

Pushing that much data down this sort of pipe seemed a bit crazy -- but it also seemed safe to suppose that the HDMI spec wouldn't carry the madness any further, especially as the original spec made reference to a dual-link, 29-pin connector that everyone expected would be deployed when more bandwidth was needed. Then along came HDMI 1.3, still using the same single-link connectors, and with it the announcement that the maximum bitrate of the signal had doubled -- to 3.4 Gbps per data channel, 10.2 Gbps total. Following the same third-harmonic rule, that calls for pairs that perform well out to 5.1 GHz -- ten times the Cat 6A limit. With HDMI 1.3 came a two-tiered cable specification, which created "Category 1" and "Category 2" cables, dubbed "Standard" and "High Speed" by HDMI Licensing. The amount of headroom in cable length, which had been substantial under 1.2, dropped -- but still, it was often possible to run the most common Category 2 signals, e.g., 1080p/60 at standard color, over distances which significantly exceeded the longest certified distances. Belden exhibited our Series-1 HDMI Cable at the National Association of Broadcasters show, running 1080p/60 for a distance of 125 feet, for example.

Well, the demand for speed was not over. Along came 4K video, and while the HDMI 1.3/1.4 speed limit could accommodate that, it could do so only at lower framerates and without added color depth. Many of us expected that when this limit was lifted, which it would have to be, we would either see some sort of signal compression, or multi-level coding, or some other method to keep the bandwidth demand on the cable down. But, when HDMI 2.0 came out, it stuck with the same simple binary encoding scheme while nearly doubling the top bitrate AGAIN -- now from 3.4 Gbps to 6.0 Gbps per channel, for a total 18.0 Gbps data rate over the three channels. But, surely, HDMI cable will be up to the challenge -- won't it?

Well, the 2.0 spec contains a little "cheat" of sorts, and that little cheat may pose some very real problems for users. The 2.0 spec doesn't update the cable quality or testing requirements in any way. Instead, the spec contains a mathematical model called the "worst cable emulator" (WCE), which is supposed to represent the worst-case HDMI cable that passes HDMI 1.3/1.4 "Category 2" ("High Speed") testing. Sources and sinks (sinks being the receiving devices, e.g., displays) are required to perform well enough in signal delivery and recovery that a cable which matches the characteristics of the WCE will still function, and this mode of proceeding allowed HDMI Licensing to avoid coming up with a new mandatory tier of cable testing to validate cables for 2.0.

That would all be well and good, but for one thing: it's impossible to accurately predict the high-frequency characteristics of a cable from its low-frequency characteristics, and for that reason it's generally not done. Every aspect of loss in a cable gets worse with higher frequencies, and what's more, it doesn't get worse in a nice, predictable curve. Return loss, for example, can be acceptable up to extremely high frequencies, and then have a huge spike when you go a bit higher. This occurs for a variety of reasons, but the chief among them is simply wavelength; frequency and wavelength are inversely proportional, and so as frequency goes up, wavelength gets shorter. Tiny imperfections and periodicities in cable manufacture which made no difference when they were physically short relative to wavelength become enormous problems when they become longer relative to wavelength. And at 9 GHz (the third harmonic of the 3 GHz fundamental of a 6 Gbps signal, following the rule mentioned above), we are dealing with some very short wavelengths indeed -- about an inch and a third in air, or a bit under an inch in a solid PE dielectric (the signal travels at nearly the speed of light in air, but only 2/3 the speed of light when the dielectric is polyethylene). Since anything a quarter-wave or longer is potentially significant in transmission-line terms, that means that cable irregularities and variabilities as short as roughly a quarter of an inch can play a significant role in signal loss.
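For anyone who wants to run the wavelength numbers, here is a small sketch. It assumes the vacuum speed of light for "air" and the 2/3 velocity factor given above for solid polyethylene; the function name is ours, purely for illustration. At 9 GHz it comes out at roughly 1.3 inches in air and 0.9 inch in PE, in the same ballpark as the round figures in the text, with a quarter-wave in PE a little under a quarter of an inch.

```python
C_M_PER_S = 299_792_458     # speed of light in vacuum (air is very nearly the same)
METERS_PER_INCH = 0.0254
PE_VELOCITY_FACTOR = 2 / 3  # the article's figure for a solid polyethylene dielectric


def wavelength_inches(freq_hz: float, velocity_factor: float = 1.0) -> float:
    """Wavelength = propagation speed / frequency, converted to inches."""
    speed_m_per_s = C_M_PER_S * velocity_factor
    return speed_m_per_s / freq_hz / METERS_PER_INCH


freq_hz = 9e9  # third harmonic of the 3 GHz fundamental of a 6 Gbps signal
in_air = wavelength_inches(freq_hz)
in_pe = wavelength_inches(freq_hz, PE_VELOCITY_FACTOR)

print(f"Wavelength in air:  {in_air:.2f} in")
print(f"Wavelength in PE:   {in_pe:.2f} in")
print(f"Quarter-wave in PE: {in_pe / 4:.2f} in")
```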

What Does this Mean for HDMI Cable Performance at 4K?

These days all of the buzz is about 4K, and people associate the HDMI 2.0 specification with 4K, so it's important to point something out at the outset: not all 4K video is the same. HDMI has supported 4K resolutions since 1.4, but with limits. To keep the signal below the 3.4 Gbps/channel, 10.2 Gbps total limit, a 4K signal couldn't have a high framerate, or deep color. It is still the case as of this writing (November 2015) that most 4K sources which are being run in home theater systems are HDMI 1.3/1.4 "high speed" applications, not HDMI 2.0 extended-bandwidth applications, and for these flavors of 4K, the HDMI 1.3/1.4 "high speed" testing is adequate to represent the conditions of use.
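To see why some 4K fits under the old limit and some doesn't, it helps to rough out the per-channel bitrate. The sketch below rests on two assumptions that are not stated in this article and are taken from commonly published HDMI/CTA-861 figures: that a 4K frame at 30 or 60 fps is 4400 x 2250 pixels in total once blanking is included, and that each channel sends 10 bits on the wire for every 8 bits of data. It also assumes full RGB/4:4:4 video with no chroma subsampling. The function names are hypothetical.

```python
HIGH_SPEED_LIMIT_GBPS = 3.4  # per channel, HDMI 1.3/1.4 "High Speed"
HDMI_2_0_LIMIT_GBPS = 6.0    # per channel, HDMI 2.0

# ASSUMPTION: total frame size (active 3840x2160 plus blanking) at 30/60 fps.
TOTAL_PIXELS_PER_FRAME = 4400 * 2250


def channel_gbps(fps: int, bits_per_component: int = 8) -> float:
    """Approximate per-channel bitrate for RGB/4:4:4 video."""
    pixel_clock_hz = TOTAL_PIXELS_PER_FRAME * fps
    # ASSUMPTION: 10 line bits per 8 data bits; deeper color scales the rate.
    return pixel_clock_hz * 10 * (bits_per_component / 8) / 1e9


def tier(gbps: float) -> str:
    """Say which per-channel limit a given bitrate falls under."""
    if gbps <= HIGH_SPEED_LIMIT_GBPS:
        return "fits within 3.4 Gbps/channel"
    if gbps <= HDMI_2_0_LIMIT_GBPS:
        return "needs the 6.0 Gbps/channel HDMI 2.0 rate"
    return "exceeds 6.0 Gbps/channel"


for fps, bpc in ((30, 8), (30, 12), (60, 8)):
    rate = channel_gbps(fps, bpc)
    print(f"4K, {fps} fps, {bpc}-bit color: {rate:.2f} Gbps/channel -> {tier(rate)}")
```

Under those assumptions, plain 4K at 30 fps stays inside the 3.4 Gbps "high speed" envelope, while adding deep color or going to 60 fps pushes the signal into the extended HDMI 2.0 range.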

But when HDMI 2.0-type 4K is being run -- currently mostly from computer video cards -- using an extended color gamut and/or high framerate (50 or 60 fps), the 1.3/1.4 testing does NOT represent the actual conditions of use for the cable. We are, at this writing, in "early days" for HDMI 2.0, but indications so far are that the honeymoon is largely over where cable run lengths are concerned. We've had a variety of customers write to us about their experiences when running full-bandwidth 2.0 video, and what they've been finding is that most "high speed cable" does not, at least when run near its longest certified limit, stand up to the task.

It remains the case under HDMI 2.0 as before that this isn't a subtle thing; if a cable is failing, you will see the failure manifested, in the great majority of cases, as one of the following, in increasing order of severity:

(1) "Sparkles": individual dropped-out pixels, or

(2) "Line" dropouts where a whole line of video, or the rightward portion, drops out, or

(3) Intermittently flashing or jumping picture, indicating that so much picture data is being lost that the display is losing sync, or

(4) No picture.

These types of issues are what to look for. If you don't see them, your cable is doing fine with the signal currently being run through it. The effect won't be subtle -- it's not about qualitative aspects of the image like shade detail, contrast, and the like.

HDMI Licensing acknowledges the issue of some "high speed" cable not quite being up to the task of actually handling the full 2.0 bandwidth, saying:

"Although many current High Speed HDMI Cables in the market will perform as originally expected (and support 18Gbps), some unanticipated technical characteristics of some compliant High Speed HDMI Cables that affect performance at higher speeds have been found. These cables are compliant with the Category 2 HDMI Cable requirements and perform successfully at 10.2Gbps, but may fail at 18 Gbps."

and hence, HDMI Licensing introduced a third testing tier in addition to "Category 1" (Standard) and "Category 2" (High Speed): the "Premium HDMI Cable."

So, What IS a "Premium HDMI Cable"?

The Premium HDMI Cable program really has two elements, to address two different but related problems. First, there is the testing element: in addition to passing the conventional 1.3/1.4 Category 2 cable tests, which test the cable only out to 3.4 Gbps per channel (10.2 Gbps combined), the Premium testing program tests the cable's electrical characteristics all the way out to 6.0 Gbps per channel (18 Gbps combined) so that the certification test DOES represent the actual conditions of use of the cable. If the cable passes certification, it should ALWAYS function correctly when used to connect two HDMI 2.0-compliant devices.

Second, there is an authentication element. One of the regrettable things about HDMI cable certification is that a lot of cable is sold as "high speed" when it isn't, or even when it hasn't passed any certification testing at all. People often suppose we are making this up; but we are routinely approached by Chinese manufacturers of HDMI cable who either have no certifications at all or who have no certifications for the particular cables they sell, and every year vendors of uncertified HDMI cable at the Consumer Electronics Show in Las Vegas get shut down in mid-show. And it's not always easy to know the difference between uncertified cable and the real thing, because a manufacturer may hold a certificate for one cable design, and then present that certificate as supporting documentation for a different cable entirely. For the consumer it's even worse than it is for us -- the brand name of the cable usually belongs to a company that's not an Adopter (consider, for example, that the leading brand sold in electronics shops is not an HDMI Adopter), and there simply is no way to look up the manufacturer's credentials. For this reason, Premium HDMI Cables must be certified at the particular length sold and must bear a Premium Certified Cable label -- these labels are printed by HDMI Licensing, they include a hologram and a 2D barcode to prevent counterfeiting, and each one of them is indexed back to the particular cable for which it was sold, so that nobody can simply obtain a batch of labels for one cable and apply them to something else entirely.

It remains the case that the intent of the spec is that any legitimate "high speed" HDMI cable under 1.3/1.4 should handle 2.0 signals; but only a Premium HDMI Cable has actually been tested at the full 18.0 Gbps bandwidth and proven to actually work; and only a Premium HDMI Cable bears the anti-counterfeiting sticker to ensure that the manufacturer of the cable really does hold a certificate for that particular product.

Where Does Blue Jeans Cable Fit In?

We became an HDMI Adopter in 2006, when there were only a few hundred adopters. We came to this market with American-made cable stock (though our terminations are done in China), incorporating Belden's patented bonded-pair technology. From the beginning, we have been letting our customers know that the superior impedance stability and skew characteristics of this bonded-pair HDMI cable are the best available form of future-proofing, because they provide top performance at high frequencies, to support high bitrates -- and so when the Premium HDMI Cable program was announced, we were eager to sign on despite the burden of extensive additional testing. Blue Jeans Cable was the first American company, and among the first nine companies worldwide, to join.

As of this writing, our Series-FE cable has been certified at all lengths from 1 to 15 feet. But what we have been saying about future-proofing is being shown true: while our Series-FE has been on the market since 2010, long before the 2.0 spec, and while its predecessor, made to the same standards but without the Ethernet pair, was on the market for a couple of years before that, we have not needed to change anything to bring these cables up to the Premium HDMI Cable testing standards.

If you're curious about Series-1 and 1E cables, our 23.5 AWG version: we expect that these would pass the electrical tests of the Premium HDMI Cable program but we currently do not plan to submit them for testing because we have good reason to suppose that one phase of the testing -- the repeated tight wrapping of the cables around a tube to verify performance after mis-handling -- is likely to break connections in the cable-to-connector joint. The design of the cable is identical to the Series-FE except for scale, and the 23.5 AWG conductors provide significantly lower attenuation, so we do expect Series-1 and 1E to be good for at least 20 and probably 25 feet at the full 18.0 Gbps bandwidth. It's possible that they may function just fine for somewhat longer distances in practice, but it is becoming clear that the kind of headroom we've seen in the past, where the cable is certified high-speed to 25 feet but will run some high-speed video 100 feet or more, is simply not going to be possible under 2.0.

Is This, After All, All a Bunch of Nonsense?

We've heard a few remarks to the effect that these new standards are a bunch of nonsense, designed to trick people into buying new cables at higher prices. We hope that understanding the technical and marketing problems the new standard addresses will help to clear that up. But, these sorts of suspicions being somewhat hard to quash, a few points bear mentioning:

(1) As always, it's simple to tell whether your HDMI cable is doing its job. If you're able to run signals at the highest resolution/framerate/color depth combination that you need to run, and you're not seeing the types of conspicuous defects that characterize a failed HDMI cable connection, there's no need to replace your cable. We'll never tell you otherwise. But if you are looking for a cable which has been validated out to the maximum data rates that HDMI 2.0 accommodates, we're here, and we've got it.

(2) We're also here to tell you that if you have Series-FE or Series-F2 cables sold before the arrival of our Premium HDMI Cable certifications, you do NOT need a new cable to have the benefit -- our old cables were built from the same materials and to the same standards as our Premium-certified cables.

(3) While our Series-FE cables are more expensive than standard Chinese-stock cables, this isn't a matter of our taking enormous markups; the fact is that it's very costly to have HDMI cable stock made in the USA (we believe, though there's no way to fully verify, that the bulk Series-FE is the most expensive 28 AWG HDMI cable stock made anywhere in the world), and to ship it across the ocean twice for termination. A six-foot Series-FE costs $21.75, while our Tartan Cable 28 AWG cable of the same length costs $4.40 -- but our margins on these two products are about the same. We're just as happy to sell you one as the other.

(4) HDMI Licensing is not in the business of selling expensive cable -- it doesn't sell any cable at all -- and HDMI Licensing gets the same royalty on an HDMI cable whether you spend a dollar or ten thousand dollars on that cable. The Premium Cable Program is not the invention of slick cable sellers. It's the creation of the people responsible for ensuring compliance with the HDMI specification, and it's there to address a legitimate set of problems -- both in terms of testing and in terms of product authentication -- that consumers of HDMI products face.

Questions?

As always, if you have questions about the HDMI standards, the testing specifications, product characteristics -- please drop us a note. We don't just push boxes of HDMI cable around a warehouse; we carry unique products, we are a licensed HDMI Adopter, and we have been dealing with the HDMI specifications and compliance therewith for a long time. Even if you are not our customer, we are happy to be an information resource on all things HDMI-cable-related.